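Introduction

Artificial neural networks are inspired by the brain, and genetic algorithms are inspired by evolution. This article proposes a new type of neural network that assists training: the genetic neural network. These networks carry properties such as a fitness score and use a genetic algorithm to optimize randomly generated weights. The genetic optimization takes place before any form of backpropagation, to give gradient descent a better starting point.

Sequential Neural Networks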

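A sequential neural network takes an input matrix, which is paired outside the model with a vector of true output values. The matrix is then transformed layer by layer, with each layer applying its weights and an activation function.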

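The network considered here is a sequential neural network with one input matrix, two hidden layers, one output layer, three weight matrices, and a single type of activation function.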
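The layer-by-layer transformation can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the layer sizes (4, 2, 1) are borrowed from the Keras model later in the article, bias terms are left out, and the names `sigmoid` and `forward` are chosen here for the example.

```python
import numpy as np

def sigmoid(z):
    """Element-wise logistic activation."""
    return 1.0 / (1.0 + np.exp(-z))

def forward(X, weights):
    """Pass X through each layer: multiply by the weight matrix, then activate."""
    a = X
    for W in weights:
        a = sigmoid(a @ W)
    return a

rng = np.random.default_rng(0)
# Three weight matrices for a 4 -> 4 -> 2 -> 1 network (biases omitted, an assumption).
weights = [rng.normal(size=(4, 4)), rng.normal(size=(4, 2)), rng.normal(size=(2, 1))]
X = rng.normal(size=(5, 4))  # five samples with four features each
y_hat = forward(X, weights)
print(y_hat.shape)  # (5, 1)
```

Each row of `X` ends up as a single value between 0 and 1, which is what the output layer of a binary classifier produces.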

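Training Algorithm

The initial predictions are likely to be inaccurate, so to train a sequential neural network to make better predictions, we view it as a composite function.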
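One way to write this composite function down, assuming a sigmoid activation $\sigma$, three weight matrices $W_1, W_2, W_3$, and no bias terms (the symbols are notation chosen here for illustration):

```latex
\hat{y} = f(X) = \sigma\bigl(\sigma\bigl(\sigma(X W_1)\, W_2\bigr)\, W_3\bigr)
```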

A loss function is then built on top of it, with the input matrix and the true output vector (X and y) held constant.
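As a hedged example, the binary cross-entropy loss (the loss the Keras code later in the article compiles with) can be written as a function of the weights alone. The symbols $W_1, W_2, W_3$, $\sigma$, and the sample count $m$ are notation assumed here, and bias terms are omitted:

```latex
L(W_1, W_2, W_3) = -\frac{1}{m} \sum_{i=1}^{m} \Bigl[ y_i \log \hat{y}_i + (1 - y_i) \log\bigl(1 - \hat{y}_i\bigr) \Bigr],
\qquad
\hat{y} = \sigma\bigl(\sigma\bigl(\sigma(X W_1)\, W_2\bigr)\, W_3\bigr)
```

Because $X$ and $y$ are fixed, the only free variables are the entries of the weight matrices.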

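Now that everything is expressed in terms of functions and there is a clear objective (minimizing the loss), we are left with an optimization problem in multivariable calculus.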
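A minimal sketch of how gradient descent attacks such a problem, using a single sigmoid layer rather than the full network to keep the gradient short. The toy data, learning rate, and function names are assumptions made for this example only.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def bce_loss(w, X, y):
    """Mean binary cross-entropy of a single sigmoid layer with weights w."""
    p = np.clip(sigmoid(X @ w), 1e-12, 1 - 1e-12)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))

def gradient_descent(w, X, y, lr=0.5, steps=200):
    """Repeatedly step against the gradient of the loss with respect to w."""
    for _ in range(steps):
        grad = X.T @ (sigmoid(X @ w) - y) / len(y)
        w = w - lr * grad
    return w

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 2))
y = (X[:, 0] + X[:, 1] > 0).astype(float)  # toy, linearly separable labels
w0 = rng.normal(size=2)                    # the random starting point
w = gradient_descent(w0, X, y)
print(bce_loss(w0, X, y), "->", bce_loss(w, X, y))
```

The result depends on where `w0` starts, which is the part of the process the genetic approach in this article tries to improve.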

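As models grow more complex, gradient descent can become very expensive to compute. Genetic neural networks offer an alternative initial training phase that provides a better starting point, so that fewer epochs are needed during backpropagation.

Genetic Neural Networks

In a genetic neural network, the network is treated as a computational object with fields and a fitness. The fields are regarded as genes that are optimized by a genetic algorithm before backpropagation. This gives gradient descent a better starting position, which allows less training time and, in the experiment reported in the Results section, a higher test accuracy. Consider a genetic neural network in which the weights are the fields of the computational object.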

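These fields are the genes of each instance of the genetic neural network. Just like a sequential neural network, it can be expressed as a composite function.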
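The idea of a network as an object whose fields are genes can be sketched without any deep learning library. The `Individual` class and `random_individual` helper below are hypothetical names used only for illustration; the article's actual implementation subclasses the Keras `Sequential` model.

```python
from dataclasses import dataclass
from typing import List, Optional
import random

@dataclass
class Individual:
    """A candidate network: its weight matrices are the genes, plus a fitness score."""
    genes: List[List[List[float]]]   # one matrix (a list of rows) per layer
    fitness: Optional[float] = None  # unknown until the individual is evaluated

def random_individual(layer_shapes):
    """Create an individual with uniformly random weights for each (rows, cols) shape."""
    genes = [[[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]
             for rows, cols in layer_shapes]
    return Individual(genes)

ind = random_individual([(4, 4), (4, 2), (2, 1)])
print(len(ind.genes), ind.fitness)  # 3 None
```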

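Before resorting to calculus, however, we take an evolutionary approach and optimize the weights with a genetic algorithm.

Genetic Algorithm

In nature, chromosomal crossover looks like this…

If we simplify the chromosomes into blocks…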

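This is the same logic the genetic algorithm uses to alter the weight matrices. The idea is to create an initial population of n genetic neural networks, compute a fitness score for each through forward propagation, and finally select the fittest individuals to produce children. The process repeats until optimal initial weights for backpropagation are found.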
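The loop described above (evaluate, select, cross over, repeat) can be sketched on a toy problem with the standard library alone. Everything here is an assumption for illustration: the genes are a flat list of numbers instead of weight matrices, the fitness is the negative squared distance to an all-ones target, and the mutation is additive noise rather than the scalar multiplication used later in the article.

```python
import random

def fitness(genes):
    """Toy fitness: higher when the genes are closer to an all-ones target."""
    return -sum((g - 1.0) ** 2 for g in genes)

def crossover(a, b):
    """Single-point crossover: head of parent a, tail of parent b."""
    point = random.randint(1, len(a) - 1)
    return a[:point] + b[point:]

def mutate(genes, rate=0.2, scale=0.5):
    """Perturb each gene with probability `rate`."""
    return [g + random.gauss(0, scale) if random.random() < rate else g for g in genes]

def evolve(pop_size=20, n_genes=6, generations=30, n_parents=5):
    population = [[random.uniform(-2, 2) for _ in range(n_genes)] for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        best_per_generation.append(fitness(ranked[0]))
        parents = ranked[:n_parents]
        children = [mutate(crossover(random.choice(parents), random.choice(ranked)))
                    for _ in range(pop_size - n_parents)]
        population = parents + children  # parents survive unchanged (elitism)
    return best_per_generation

random.seed(0)
history = evolve()
print(history[0], history[-1])
```

Because the fittest parents are carried over unchanged, the best fitness in `history` never gets worse from one generation to the next.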

Applying Genetic Neural Networks

First, we have to build the genetic neural network. We use a model with four input nodes, two hidden layers, and one output layer (to match the figure above); the same approach can be extended to any type of neural network.

    import pandas as pd
    import numpy as np
    import random
    from sklearn.model_selection import train_test_split
    from sklearn.metrics import accuracy_score
    from keras.models import Sequential
    from keras.layers import Dense


    # New type of neural network
    class GeneticNeuralNetwork(Sequential):

        # Constructor
        def __init__(self, child_weights=None):
            # Initialize the Sequential model superclass
            super().__init__()

            # If no weights are provided, let Keras generate them randomly
            if child_weights is None:
                self.add(Dense(4, input_shape=(4,), activation='sigmoid'))
                self.add(Dense(2, activation='sigmoid'))
                self.add(Dense(1, activation='sigmoid'))

            # If weights are provided, set them within the layers (biases start at zero).
            # Note: the `weights=` constructor argument is a legacy Keras 2.x feature;
            # if your Keras version rejects it, add the layers first and then call
            # layer.set_weights([kernel, bias]) on each of them instead.
            else:
                self.add(Dense(4, input_shape=(4,), activation='sigmoid',
                               weights=[child_weights[0], np.zeros(4)]))
                self.add(Dense(2, activation='sigmoid',
                               weights=[child_weights[1], np.zeros(2)]))
                self.add(Dense(1, activation='sigmoid',
                               weights=[child_weights[2], np.zeros(1)]))

        # Forward propagate the training matrix and store the fitness score
        def forward_propagation(self, X_train, y_train):
            # Forward propagation
            y_hat = self.predict(X_train.values)
            # Fitness score is the training accuracy of the rounded predictions
            self.fitness = accuracy_score(y_train, y_hat.round().ravel())

        # Standard backpropagation
        def compile_train(self, epochs):
            self.compile(
                optimizer='rmsprop',
                loss='binary_crossentropy',
                metrics=['accuracy']
            )
            # Relies on the module-level X_train and y_train created in the
            # evolution script further below
            self.fit(X_train.values, y_train.values, epochs=epochs)

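Now that the genetic neural network is built, we can develop a crossover algorithm. We use single-point crossover, similar to the biological illustration above. Every matrix column has the same chance of being selected as the crossover point, so that each parent combines its genes and passes them on to the child.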

    # Crossover traits between two genetic neural networks
    def dynamic_crossover(nn1, nn2):
        # Kernel (weight) matrix of every layer in each parent
        nn1_weights = [layer.get_weights()[0] for layer in nn1.layers]
        nn2_weights = [layer.get_weights()[0] for layer in nn2.layers]
        child_weights = []

        # Iterate through the weights of all layers for crossover
        for w1, w2 in zip(nn1_weights, nn2_weights):
            # Pick a single split point based on the number of columns
            split = random.randint(0, w1.shape[1] - 1)
            # Columns from the split point onward, including the last one,
            # are taken from nn2 (the original loop bound skipped the last column)
            w1[:, split:] = w2[:, split:]
            # After crossover, add the weights to the child
            child_weights.append(w1)

        # Add a chance for mutation
        mutation(child_weights)

        # Create and return the child object
        return GeneticNeuralNetwork(child_weights)
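To see the column-wise single-point crossover in isolation, here is a small NumPy-only sketch that does not depend on Keras. The helper name `single_point_column_crossover` and the explicit `split` argument are assumptions made for the example; in the article's code the split point is drawn at random.

```python
import numpy as np

def single_point_column_crossover(w1, w2, split):
    """Return a child whose columns [0, split) come from w1 and [split, n_cols) from w2."""
    child = w1.copy()
    child[:, split:] = w2[:, split:]
    return child

parent_a = np.zeros((4, 4))  # genes from the first parent are all 0
parent_b = np.ones((4, 4))   # genes from the second parent are all 1
child = single_point_column_crossover(parent_a, parent_b, split=1)
print(child[0])  # [0. 1. 1. 1.]
```

Working on a copy keeps both parents intact, which matters when the same parent is reused for several children.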

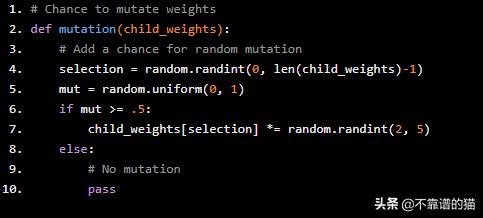

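To make sure the population explores the solution space, mutation should occur. In this case, because the solution space is very large, the mutation probability is considerably higher than in most other genetic algorithms. There is no single prescribed way to mutate a matrix; here we randomly apply a scalar multiplication with a factor between 2 and 5.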

    # Chance to mutate weights
    def mutation(child_weights):
        # Pick one weight matrix at random
        selection = random.randint(0, len(child_weights) - 1)
        # With probability 0.5, scale that matrix in place by an integer
        # factor from 2 to 5; otherwise leave the weights unchanged
        mut = random.uniform(0, 1)
        if mut >= .5:
            child_weights[selection] *= random.randint(2, 5)
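A variant of the same idea that returns a mutated copy instead of changing its input may be easier to test. This is a sketch, not the article's function: the name `mutated_copy` and the `rate`, `low`, and `high` parameters are introduced here for illustration.

```python
import random
import numpy as np

def mutated_copy(weights, rate=0.5, low=2, high=5):
    """Copy `weights`; with probability `rate`, scale one matrix by an integer in [low, high]."""
    out = [w.copy() for w in weights]
    if random.random() < rate:
        idx = random.randrange(len(out))
        out[idx] = out[idx] * random.randint(low, high)
    return out

weights = [np.ones((4, 4)), np.ones((4, 2)), np.ones((2, 1))]
always = mutated_copy(weights, rate=1.0)  # mutation is certain
never = mutated_copy(weights, rate=0.0)   # mutation never happens
changed = [i for i, (a, b) in enumerate(zip(weights, always)) if not np.array_equal(a, b)]
print(len(changed))  # 1
```

Exposing the rate as a parameter makes it easy to try the lower mutation probabilities that are more common in other genetic algorithms.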

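Finally, we simulate the evolution of the genetic neural networks. The networks need data to learn from, so we use the well-known banknote machine-learning dataset.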

    # Read data
    data = pd.read_csv('banknote.csv')

    # Create matrix of independent variables
    X = data.drop(['Y'], axis=1)

    # Create vector of dependent variable
    y = data['Y']

    # Create a train/test split for genetic optimization
    X_train, X_test, y_train, y_test = train_test_split(X, y)

    # Networks waiting to be evaluated, and the pool of evaluated networks
    networks = []
    pool = []

    # Track generations
    generation = 0

    # Initial population size
    n = 20

    # Generate n randomly weighted neural networks
    for i in range(n):
        networks.append(GeneticNeuralNetwork())

    # Cache max fitness and the associated weights
    max_fitness = 0
    optimal_weights = []

    # ASSUMPTION: the loop condition was truncated in the source text
    # ("while max_fitness"); this threshold is a placeholder, not the
    # author's value
    target_fitness = 0.9

    # Evolution loop
    while max_fitness < target_fitness:
        # Log the current generation
        generation += 1
        print('Generation: ', generation)

        # Forward propagate the neural networks to compute a fitness score
        for nn in networks:
            nn.forward_propagation(X_train, y_train)
            # Add to pool after calculating fitness
            pool.append(nn)

        # Clear for propagation of next children
        networks.clear()

        # Sort by fitness, fittest first
        pool = sorted(pool, key=lambda x: x.fitness, reverse=True)

        # Find max fitness and log the associated weights
        for i in range(len(pool)):
            # If there is a new max fitness among the population
            if pool[i].fitness > max_fitness:
                max_fitness = pool[i].fitness
                print('Max Fitness: ', max_fitness)
                # Reset optimal_weights, then collect the weights of every layer
                optimal_weights = []
                for layer in pool[i].layers:
                    optimal_weights.append(layer.get_weights()[0])
                print(optimal_weights)

        # Crossover: each of the top 5 has 2 children with randomly chosen partners
        for i in range(5):
            for j in range(2):
                # Create a child and add it to networks so that its
                # fitness is calculated in the next iteration
                temp = dynamic_crossover(pool[i], random.choice(pool))
                networks.append(temp)

    # Create a genetic neural network with the optimal initial weights
    gnn = GeneticNeuralNetwork(optimal_weights)
    gnn.compile_train(10)

    # Test the genetic neural network out of sample
    y_hat = gnn.predict(X_test.values)
    print('Test Accuracy: %.2f' % accuracy_score(y_test, y_hat.round().ravel()))

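Results

Model 1: 10 generations of the genetic algorithm followed by 10 epochs of training

Model 2: 10 epochs of training only

The genetic neural network reached a test accuracy of .96, while the standard neural network reached a test accuracy of .57. With the same number of training epochs, the genetic neural network improved model accuracy by 0.39.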